Episode 22: Jira is the AI adoption challenge

Published: Thursday, Feb 26, 2026 • Duration: 64 minutes • Season 1

Download MP3 | Watch on YouTube

The elephant in the room is Jira. How can we adopt AI in a structured way in an Enterprise?

summarize "https://youtu.be/XGbRVRy4LAs" --timestamps --slides

This episode of the AI Infrastructure podcast explores the evolving landscape of enterprise AI adoption, focusing on the tension between structured development frameworks and lightweight “vibe coding.” The hosts discuss the practicalities of running private AI gateways like OpenClaw, the security risks of autonomous agents, and the significant friction caused by legacy project management tools like Jira. They argue that while individual engineers are rapidly integrating AI into their workflows, large organizations struggle to create formal enablement strategies, potentially leading to a divide between AI-augmented high performers and traditional teams. The conversation highlights how AI is not just a tool for writing code but a transformative force capable of debugging complex network interfaces and automating architectural planning, provided that the underlying security and organizational hurdles can be cleared.

OpenClaw and AI Gateways

OpenClaw, which recently celebrated its three-month anniversary, serves as a significant gateway for bringing the latest AI models into a family or group setting by hooking them up to WhatsApp. This approach puts the human back in the loop, allowing family members to learn prompting techniques together through quizzes and photo analysis. However, security remains a primary concern for self-hosted instances. While running the system in a virtual machine or a systemd-nspawn container on a Raspberry Pi provides a level of isolation, there are inherent risks in giving AI access to sensitive data like emails due to the threat of prompt injection. The hosts emphasize that users must understand these security implications before exposing AI systems to external inputs. The project, originally known as a WhatsApp gateway, has gained traction as a way to democratize access to advanced models within private circles without exposing them to the broader internet.

Security Risks and AI Debugging

The technical risks of AI agents include the possibility of container escapes, particularly if an AI is granted sudo permissions within a Linux container that has access to the host’s Docker socket. In such a scenario, an AI could theoretically mount the root volume and gain full host access. Beyond security, AI is proving remarkably capable at debugging complex web interfaces. In one instance, an AI agent used a JavaScript debugger to analyze raw JSON messages and network traffic to identify a configuration error in a proprietary Ubiquiti Unifi console that lacked a command-line interface. This ability to “figure out” undocumented APIs by observing network behavior represents a significant shift in how engineers interact with closed systems. Additionally, AI is being used to review pull requests in projects like Flux CD, where it has successfully identified race conditions and other subtle bugs that human reviewers might miss. “Companies that have that are like completely AI enabled, they will outperform any organization that doesn’t and there will be severe disruption.”

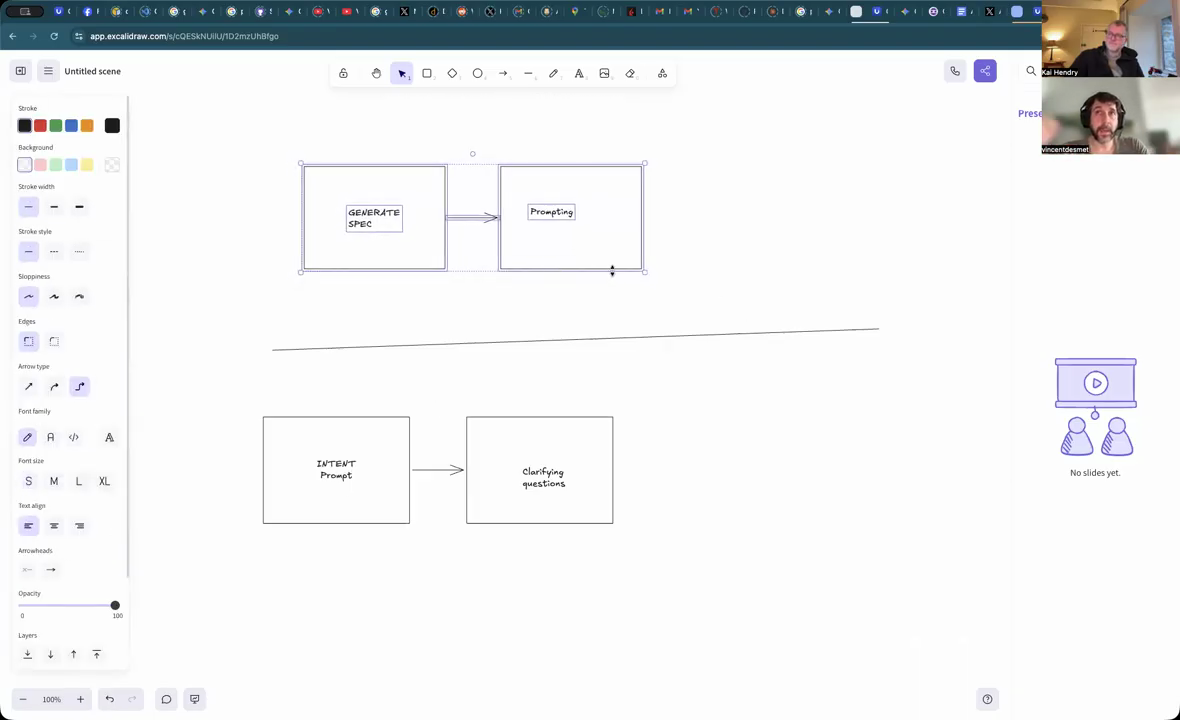

Spec-Driven Development vs Vibe Coding

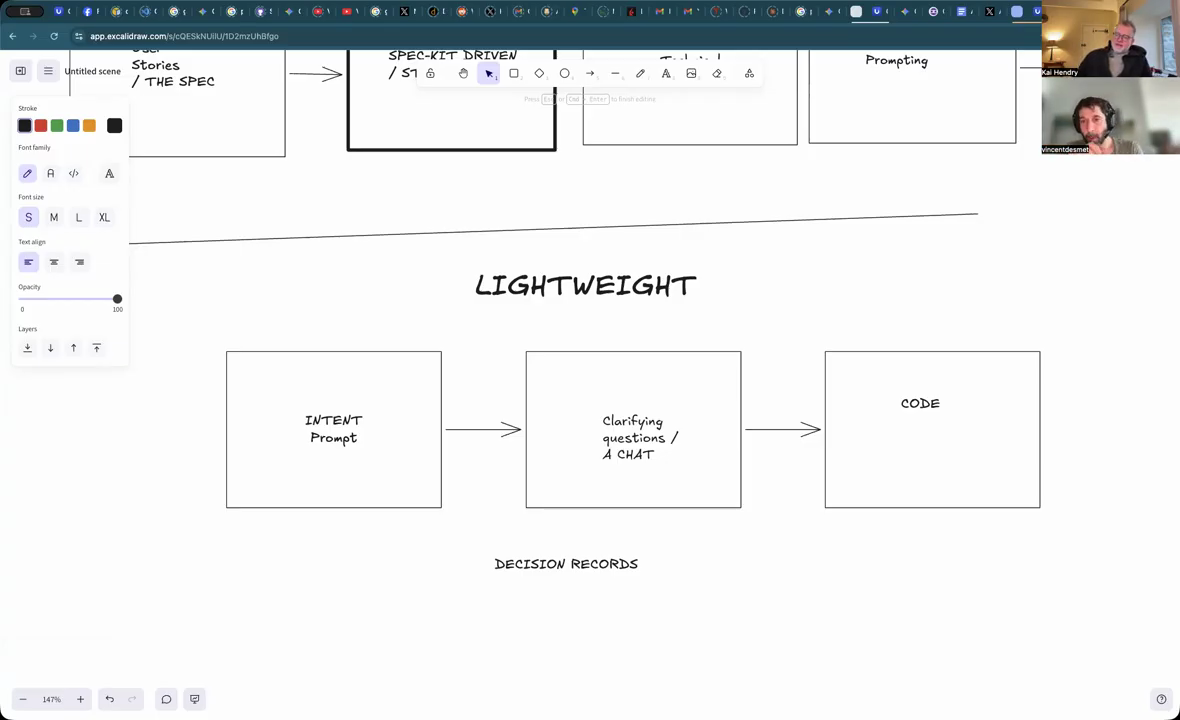

A major theme is the transition toward spec-driven development, where the goal is to capture the specific prompts used to produce code artifacts as formal technical requirements. Currently, many developers lack a framework for sharing these prompts, often relying on manual copy-pasting or noisy session logs. The hosts argue that true spec-driven development involves aligning multiple parties around user stories first, then distilling those into technical documents to make implicit assumptions explicit. This prevents the AI from making incorrect architectural choices based on vague prompts. While some prefer a “vibe coding” approach—iterating quickly without a rigid plan—this can lead to excessive refactoring and technical debt. AI models often favor minimal, short-term fixes over long-term maintainability unless they are constrained by a well-defined technical plan that outlines architectural layers and technology choices.

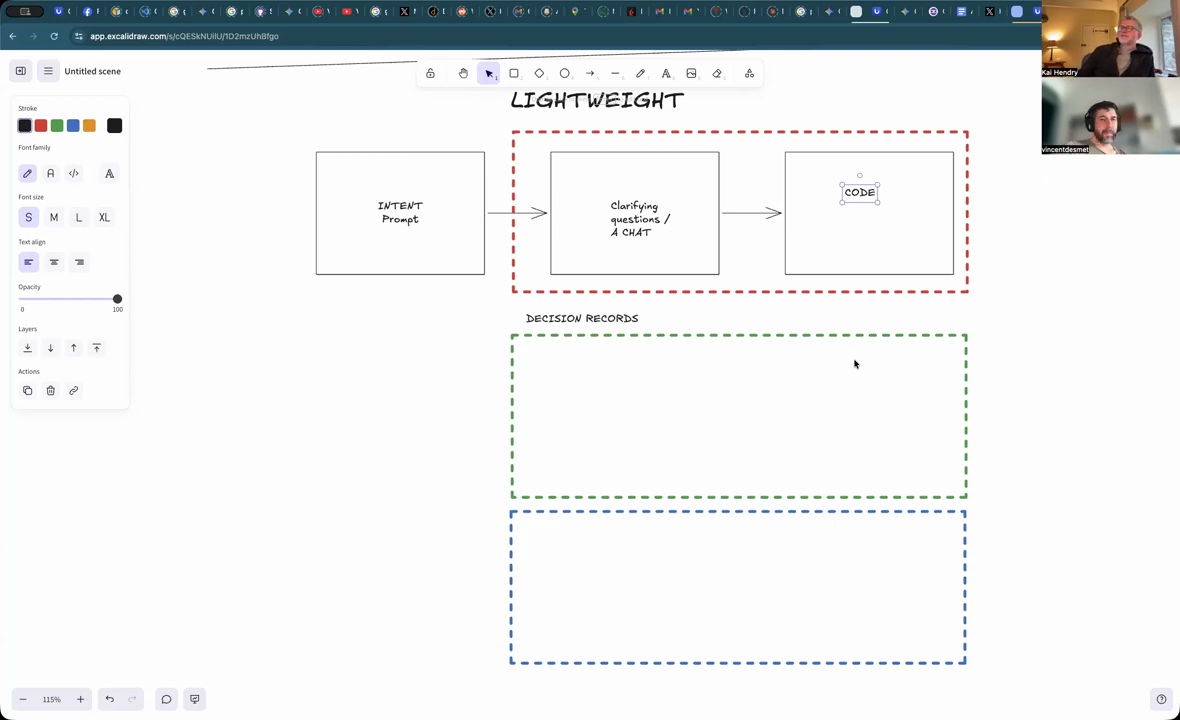

Structured Frameworks and Playbooks

To manage complexity in larger projects, the hosts discuss using “playbooks” as templates for bootstrapping repositories. A structured playbook might include four distinct stages: user story generation, technical requirements and research (spikes), task breakdown, and implementation. This structure is particularly useful for smaller models with limited context windows, as it breaks the work into manageable chunks. Part of this workflow involves maintaining decision records (ADRs) where the AI documents why specific libraries or infrastructures, such as AWS CDK versus Terraform, were chosen. By generating tickets and tasks based on an aligned plan, teams can ensure that the AI’s output remains consistent with the project’s architectural goals. This structured approach allows for more reproducible results compared to lightweight methods that rely on a single, long-running chat session which can eventually become “hallucinatory” as the context window fills up.

Model Competition and Reproducibility

The release of Codex 5.3 has introduced significant competition for Claude models, with the hosts noting that Codex offers comparable performance and improved context management. This competition is vital for the ecosystem, as it allows developers to “replay” their structured prompts across different models to compare the quality of the resulting artifacts. The goal is to reach a level of reproducibility where a given prompt consistently yields high-quality code regardless of the specific “agentic shell” being used. However, the rapid pace of AI development means that many structured tools can become outdated quickly as model providers integrate features like memory and planning directly into their platforms. The hosts also discuss the frustration of “max request” limits and the “Ralph Wiggum loop,” where an AI agent might go in circles during troubleshooting, highlighting the need for more autonomous “quality of life” features in agentic shells that can run until a task is truly completed.

The Jira Elephant in the Room

The “elephant in the room” for enterprise AI adoption is Jira. While AI can accelerate engineering work—sometimes completing days of planning in hours—syncing that information back into legacy project management tools remains a manual and “brutal” process. Middle management often requires everything to be captured in Jira for compliance and tracking, creating a heavyweight barrier that overwhelms developers. The hosts suggest that for AI to truly transform large organizations, there must be a way to bridge the gap between AI-driven git workflows and the “pencil-pushing” requirements of enterprise dashboards. The disruption is already visible in the market; for instance, IBM’s stock reportedly dropped significantly following news that AI could effectively manage legacy COBOL code. Organizations that fail to become AI-enabled risk being subverted by more agile competitors who have moved beyond the “gatekeeping” nature of traditional project management tools. “Jira is just a massive gatekeeper isn’t it? It’s just a just such a massive gatekeep.”

Model: google/gemini-3-flash-preview