Episode 30: Managing context is the challenge

Published: Saturday, Apr 25, 2026 • Duration: 76 minutes • Season 1

Download MP3 | Watch on YouTube

Chapters

00:00 Labor Day and Global Holidays 19:10 AI and the Future of Work 22:05 AI’s Efficacy and Control 24:48 AI’s Role in Development 27:25 Community Feedback and Expectations 30:05 The Impact of AI on Business 32:33 Maintaining AI-Driven Projects 35:11 The Evolution of AI Models 37:57 Navigating AI’s Challenges 40:39 Future of AI and User Experience 45:37 Enhancing Workflow with Spec Logic 47:10 The Role of Adversarial Review Agents 49:46 Balancing Context and Task Management 52:13 Navigating Team Dynamics and Quality Control 55:31 The Tension Between Standardization and Autonomy 59:10 Integrating AI into Development Processes 01:02:07 The Future of Collaboration in AI-Driven Teams 01:05:55 Addressing Quality and Security Concerns 01:11:33 The Challenges of AI Adoption in Enterprises

summarize "https://youtu.be/AmmD-826yvE" --timestamps --slides

This episode (the hosts note it’s episode 30) is a wide-ranging conversation about how teams and platform engineers are adapting to AI: new skills and roles, the risk of sprawl and technical debt, how AI changes workflows (agents, “vibe coding,” adversarial reviews), maintenance and security pitfalls, and where governance, observability and developer platforms fit into an AI-enabled org. Concrete examples include handing work to AI that then finds the real root cause, a claim of “a million lines of code per month” from a vendor demo, a 24/24-task implementation run that took roughly 30–60 minutes, and recurring themes: measurement (DORA-style), ephemeral environments for safe testing, and the tension between grassroots AI adoption and centralized platform standards.

Context switching and platform engineering’s new remit

Hosts open by saying AI forces frequent context switching — “AI requires some spinning of plates” — and that platform engineering must go beyond CI/CD to become an AI context provider and feedback-loop owner, surfacing observability for agents as well as humans, and measuring team effectiveness rather than just reading logs.

Wearing many hats and measuring business value

Both speakers describe hybrid roles (infrastructure lead, security champion, developer) and the pain of juggling responsibilities; organizations still demand measurable business value from platform teams, so long-term strategy must prioritize enabling and governing AI adoption rather than doing one-off cloud migrations or ad hoc platform work.

When AI surprises: good, bad, and detective work

They trade real incidents: handing a problem to an AI assistant that traced misredaction to a Lambda forwarder and Terraform variables the human hadn’t considered, showing the model can both mislead and surface the correct root cause; one host frames the tradeoff as being “handing off my brain” while still needing to validate outputs.

Productivity claims, subsidy risk, and maintenance problems

Speakers push back on vanity metrics (lines of code) and on overreliance on subsidized cloud models: some teams report rapid delivery but later discover codebases they “can’t maintain” because they depend on external models or token-subsidized features that may change, leaving fragility when pricing, rate limits, or capabilities shift.

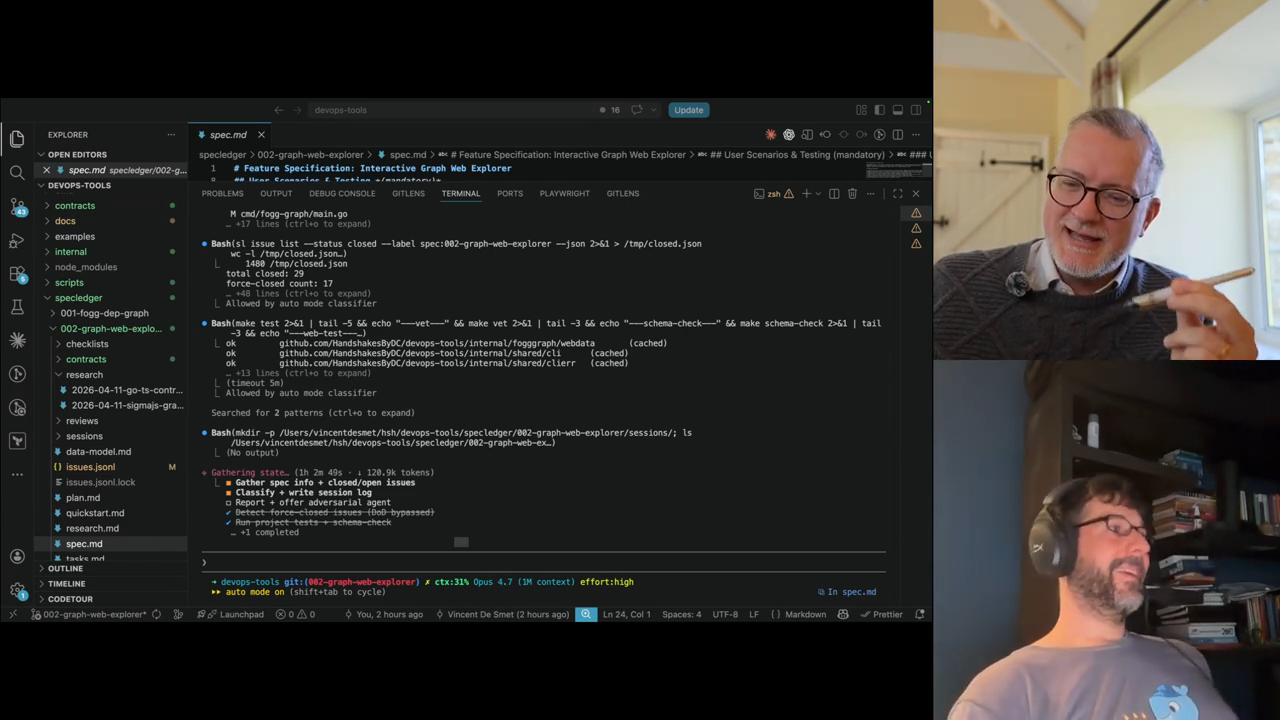

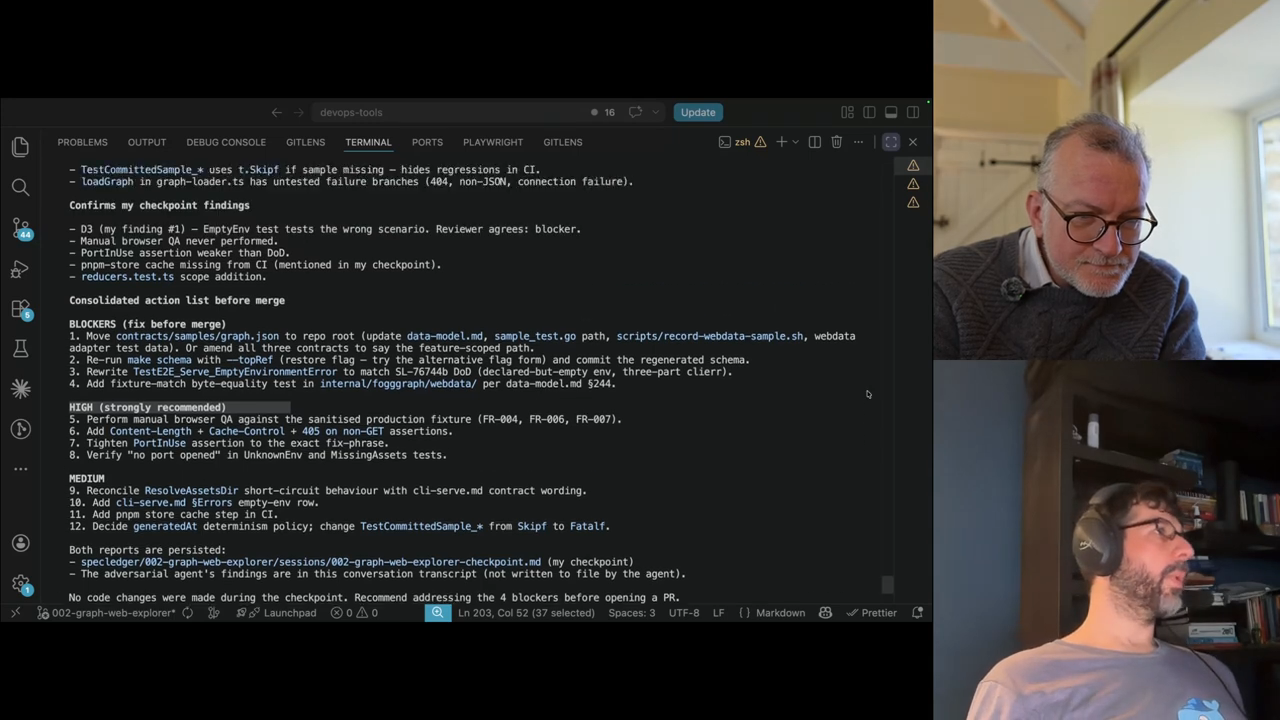

Workflows: vibe coding, spec-ledgers, and adversarial reviews

They describe a practical workflow: write an executable spec/plan, run a long session where an AI implements tasks (one example completed 24 of 24 MVP tasks in ~30–60 minutes), then run an independent adversarial agent in a fresh context to validate against the definition of done; that adversarial review often reopens items, forcing human verification and test automation like Playwright scripts.

Governance, golden paths and platform tooling at scale

Adoption creates uneven quality across teams; the hosts argue for measurement (DORA metrics, test coverage, rollback frequency), internal developer platforms (Backstage + MCPs/agents), and registries for agents and skills, but warn that top-down mandates fail without grassroots buy-in and clear metrics to prove value.

“AI requires some spinning of plates”

Model: openai/gpt-5-mini