Episode 29: Spec Driven Discovery

Published: Friday, Apr 17, 2026 • Duration: 86 minutes • Season 1

Download MP3 | Watch on YouTube

https://github.com/anthropics/claude-quickstarts/blob/main/autonomous-coding/prompts/app_spec.txt

https://dabase.com/blog/2026/ai-engineer-europe/

0:00 - Introduction and Camera Setup 0:11 - Opus 4.7 and Android News 1:06 - Caching and Performance Issues 3:09 - Anthropic API Key Concerns 4:08 - AI Engineer Conference Insights 6:36 - Claude Code Desktop App Features 8:07 - Command-Control App Overview 9:30 - Security and API Key Management 21:27 - Rust Ecosystem and Left-Pad Incident 47:42 - Spec-Driven Development Discussion 78:14 - AI and Project Management

summarize "https://youtu.be/8tdxpecX2B8" --timestamps --slides

A long, wide-ranging conversation that rolls through current model-ops pain points (Anthropic/Claude caching, plan limits and account terminations), context and session management tactics, practical agent tooling (desktop apps, remote control, command-control), security and supply-chain trouble (vendoring, CVE noise, Rust “left-pad” yank), and the tension between spec-driven development and test-driven / iterative workflows. The hosts share examples, concrete numbers, and workflows they actually use: 1‑hour vs 5‑minute cache windows, a 1,000,000‑token context, a 20% threshold (200,000 tokens), five‑hour session budgets, and real debugging patterns like “rewind.” Two short exact excerpts captured in-context: “Don’t correct the model. Don’t say that’s not what I meant. Just go back to your previous prompt” and “I yanked all the versions and he completely archived the repository.”

Morning news and Anthropic drama

Hosts open with recent model-news: Opus 4.6/4.7 chatter, caching changes and community outcry. They explain caching tradeoffs: longer cache (example cited: one hour) reduces compute and cost, while shrinking cache (example cited: five minutes) forces reprocessing and can raise usage and perceived performance problems. Reddit and conference chatter included complaints about degraded model quality and account/API‑key restrictions; one anecdote mentions an account termination tied to third‑party usage. The segment frames context: users feel variable performance, and platform-side changes ripple quickly through heavy users.

Session, context, and agent tactics

Discussion covers context management and the practical limits people enforce: one speaker uses Opus with a 1,000,000‑token context and treats 20% of that (200,000 tokens) as a cutoff to start a fresh session. They debate auto‑compaction vs manual compaction and describe a common operational pattern: when a session derails, “rewind” to a previous prompt instead of correcting the model mid‑conversation — “Don’t correct the model. Don’t say that’s not what I meant. Just go back to your previous prompt”. They also explain “checkpoint” and rewind tooling behaviors and how multi‑session desktop apps and mobile teleport/remote features let you continue work across devices.

Tools, monitoring, and API key hygiene

Hosts survey agent/CLI/desktop tooling: Claude Code desktop improvements (multi‑session terminal, better diffs, scheduling/routines, flash monitor), third‑party utilities (command-control to reconnect to live sessions), and a Codex bar that tracks weekly usage and warns when you’ll hit budget. They flag risks in API routers and multi‑provider managers: tests show some router services exfiltrate keys or perform malicious actions, so you must vet binaries and integrations. At org scale, budgets are often enforced by groups/cohorts and cause token denials across users — a recurring pain in enterprise governance.

Security scans, vendoring, and the human signal problem

A long exchange on security tooling and team workflows: automated scanners produce noisy CVE lists and poor signal; teams need a practical way to accept or document risk per line. The hosts recount a vendoring example where bundling a supplier shell script failed security checks, forcing a workaround (replacing vendored file with a curl during build) that paradoxically reduced transparency. They criticize rigid dashboards and permission flows that push engineers toward insecure shortcuts, and they discuss how security processes often break ownership and incentivize evasive behavior.

Rust “left‑pad” style incident and supply‑chain maintenance

They cover a concrete supply‑chain event in Rust: a maintainer yanked all package versions and archived a repo, which broke downstream projects that lacked lockfiles. Quote from the transcript: “I yanked all the versions and he completely archived the repository.” The hosts unpack community reactions: some defend the yanker’s use of public package‑manager controls, others worry about maintainers being pressured, exploited, or replaced (and the risk that a well‑intentioned handover could become a backdoor). They debate funding/compensation for critical maintainers and the tradeoffs between deprecating problematic packages vs handing them to third parties.

Spec, tests, agents, and real work examples

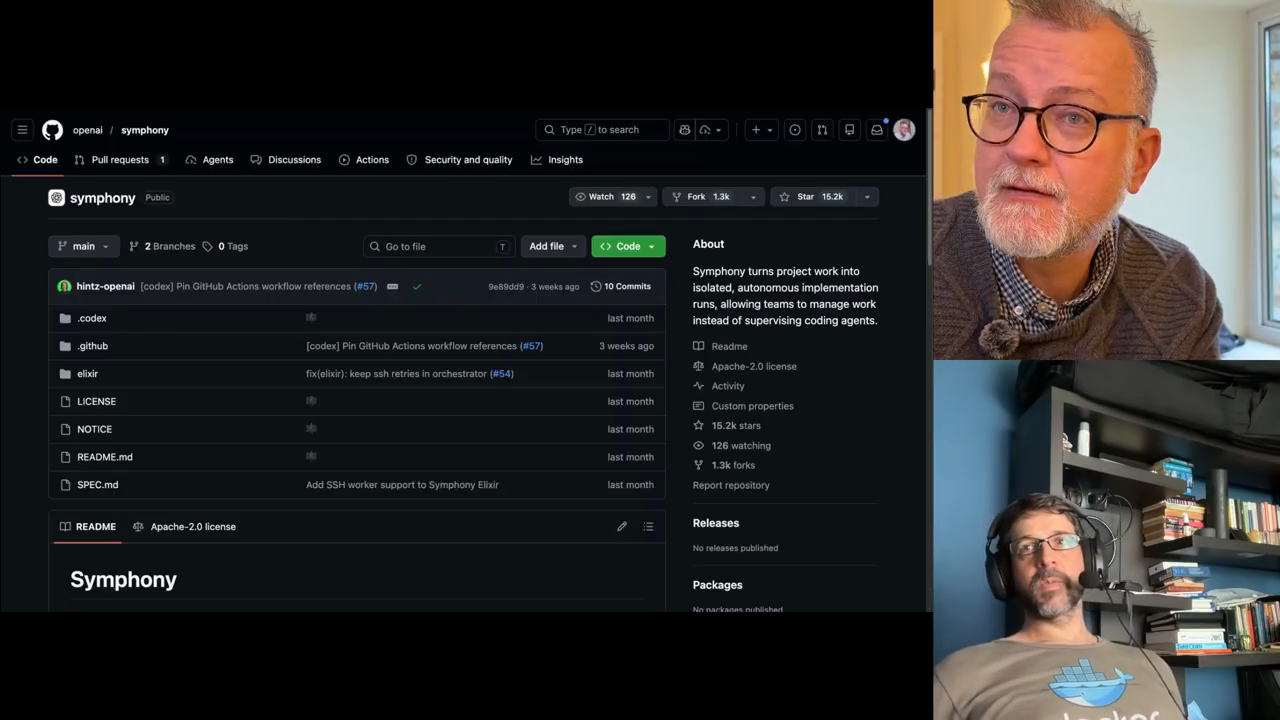

Conversation turns to spec‑driven workflows (Spec Kit, Spec Ledger, OpenSpec, Symphony XML specs) and where they succeed or fail. Key practical points: spec artifacts often become “a graveyard” unless kept in sync with implementation; end‑to‑end tests are the real living verification (the hosts recommend “code → tests” rather than relying on specs alone). They demonstrate an agent success story: Claude co‑work accessed Gmail, found a Belgian embassy message, downloaded PDFs, filled fields from passport photos, and drafted a Dutch reply for a passport appointment — a concrete example of agents completing tedious bureaucratic flows. The segment ends on process and org design: modular monoliths vs microservices, brownfield projects benefit from agents for exploration and automated test harnesses, and spec tools are useful for discovery but shouldn’t replace iterative validation (and may cost nontrivial credits when used for full auto‑builds).

Model: openai/gpt-5-mini